When Coverage Meets Bug Hunting

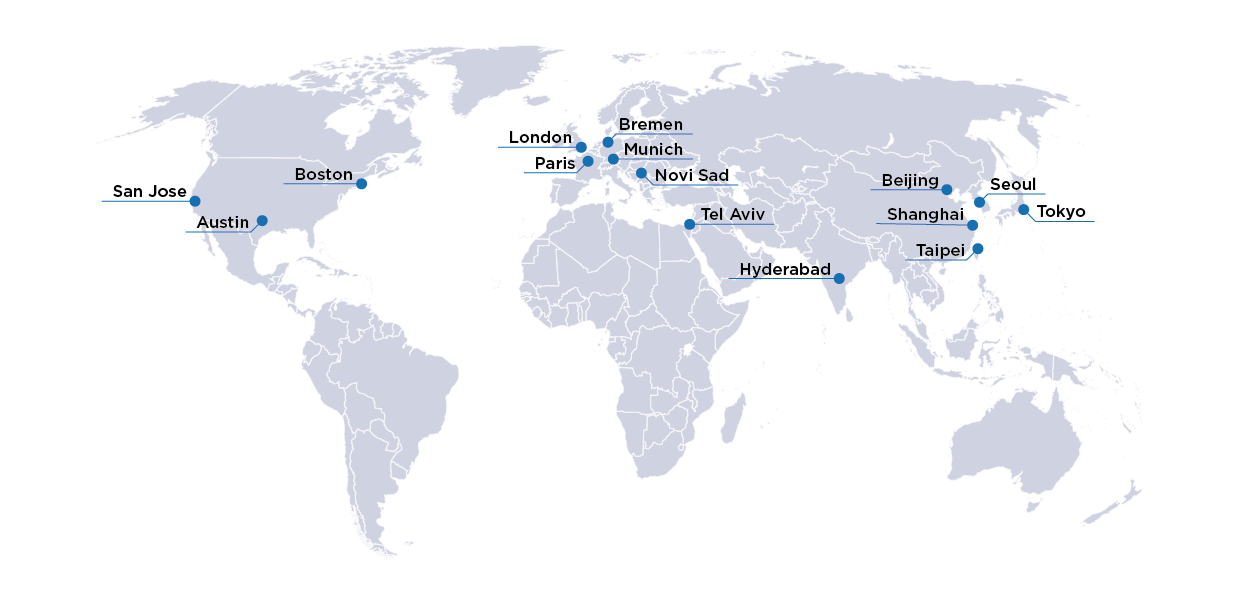

As chip design complexity continues to grow and the application of these chips diversifies from desktop and mobile platforms to automotive, avionics to IoT – this poses a unique mesh of challenges for validation and verification for safety, security, power and performance. The increased use of formal verification across the semiconductor industry over the last two decades is a testament to the fact that formal is about possibilities. Its application in safety verification, security verification, low-power validation and performance verification demonstrates the tremendous potential of the technology.

Formal’s main strength has been its use of mathematical solvers (sometimes called proof engines) which allow users to deduce exhaustive proofs as well hunt bugs. Whereas both these capabilities are equally important, deducing the point when verification can be concluded, or “signed-off,” remains a challenge. This is due the fact that full exhaustive proofs are not always adequate to deduce that one has completed the verification task, as verification is only as good as the constraints used. In the presence of these constraints, it’s perfectly possible to accidentally over-constrain an environment to deduce exhaustive proofs, providing an illusion that exhaustive verification has taken place.

So how does one detect an over-constraint in a test bench? How can one determine that the exhaustive proofs are of good quality and that there are no bugs in the design? How does one determine whether we have all the right checks for the design? What is the overhead to get to the, all-elusive, 100% coverage?

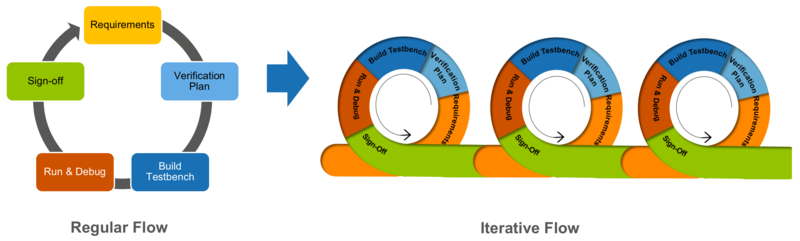

In this talk, I will provide answers to these questions and will show how OneSpin’s Quantify provides verification closure for assertion based formal verification. I will show, through an example, how Quantify’s metric driven coverage model can be used iteratively from the first hour of design bring up to complete sign-off in verification. Quantify provides assessment of verification quality by generating a single metric that covers both structural and functional coverage. The coverage report is meaningfully colour coded making it easy-to-read and is directly linked into the design browser, providing the DV engineer valuable visual feedback on what has (has not) been covered by the verification environment. This helps expose corner case bugs in design, detects over-constraining (bad constraints), and exposes missing checks. The DV engineer can add more checks, remove bad constraints and can find more bugs in the design – leading to an overall more comprehensive and rigorous test bench that is capable of finding bugs, and establishing conclusively that exhaustive proofs have not missed any bugs in the design. The example shown in this presentation works equally well for bounded proofs.

By using Quantify in the manner illustrated in the talk, both designers and verification engineers can get continuous feedback as they develop their assertion based verification test environments and gain knowledge of when they will be ready for sign-off.

If you want to learn more about our Quantify Coverage solution, you can download the technical data sheet here…